1M Denmark Email Lists: Business and Consumer Address

Emailproleads.com understands the importance of precision in marketing, which is why we offer the most comprehensive Denmark email list in the market. In the digital age, having access to a reliable and extensive email list is crucial for businesses and marketers aiming to reach their target audience effectively. With ourtop-notch services, you can access high-quality leads tailored to your specific needs.

Denmark, a picturesque country in Northern Europe, is home to a vibrant business landscape. To thrive in this competitive market, businesses need a targeted approach, and what better way than leveraging a Denmark email list? Emailproleads, a leading provider in the industry, offers a comprehensive solution tailored to your specific needs. In this article, we will explore the significance of a Denmark email list, address common queries, and guide you on where to buy the best lists in Denmark.

If you're on the hunt for a targeted and reliable email list in Denmark, look no further than Emailproleads.com. We specialize in providing top-notch Denmark email lists that cater to your specific marketing needs. Whether you're a budding entrepreneur or an established business, our Denmark email lists can significantly enhance your outreach efforts.

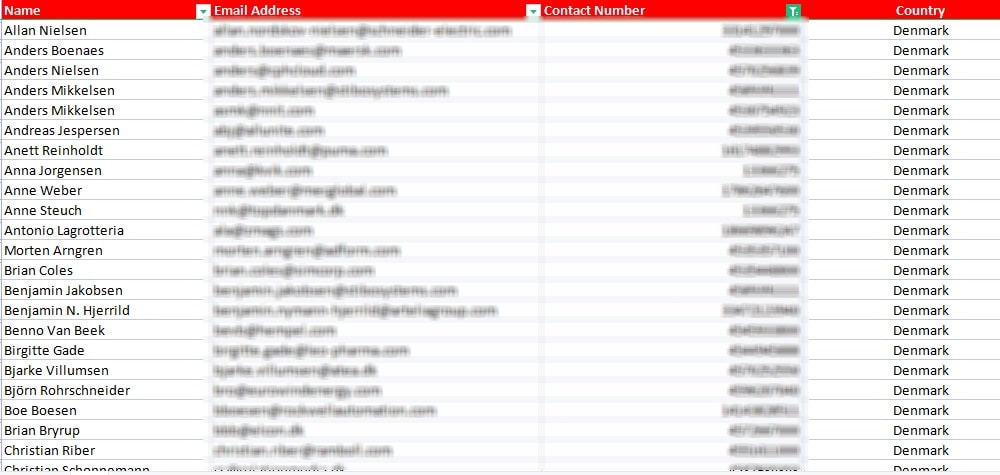

What is a Denmark email list?

A Denmark email list is a curated database of email addresses belonging to individuals and businesses across various states in Denmark, including popular regions like Copenhagen, Aarhus, and Odense. These lists serve as a goldmine for businesses looking to connect with potential clients, partners, or customers. At Emailproleads.com, our Denmark email list is meticulously compiled, ensuring accuracy and relevance to empower your marketing efforts.

Explore Our Comprehensive Denmark Email List for Targeted Marketing Success

HURRY UP! FREE 1 Industry Lead generation Included - Act Now!

Buy Denmark Email List Now & Save $1599

Usually $499 Monthly, Today Only 1-Time $199

HURRY UP! The prices rise in...

Can You Buy Email Lists in Denmark?

Yes, you can buy email lists in Denmark, and Emailproleads.com is your go-to source for high-quality leads. Our Denmark email list provides you with the opportunity to target specific regions and industries, allowing you to tailor your campaigns according to your preferences. Whether you need a Denmark business email list, an email list sorted by job roles, or a consumer email list, we have you covered.

Yes, businesses in Denmark can indeed buy email lists to enhance their marketing strategies. Emailproleads, with its extensive experience and expertise, offers high-quality Denmark email lists. These lists are updated regularly, guaranteeing you the most recent and accurate information. By investing in a Denmark email list, you save valuable time and resources, enabling you to focus on what you do best – growing your business.

Where to Buy Denmark Email Lists?

When it comes to finding reliable sources for Denmark email lists, Emailproleads.com stands out as a trusted partner. We offer a variety of lists, including Denmark company email lists, industry-specific lists, and even consumer email lists. Our data is constantly updated to ensure accuracy, enabling you to reach your desired audience effectively.

When it comes to purchasing Denmark email lists, Emailproleads.com stands out as a reliable and trusted source. With a user-friendly interface and a vast collection of targeted lists, Emailproleads ensures you find the perfect match for your business needs. Whether you are targeting businesses in Copenhagen, Aarhus, or Odense, Emailproleads offers comprehensive lists tailored to specific regions, ensuring your marketing efforts are localized and effective.

Denmark Email Address List

Looking for a list of email addresses in Denmark? Emailproleads.com provides a hassle-free solution. Our Denmark email address list is meticulously curated, guaranteeing you access to authentic and up-to-date email contacts. Moreover, if you're searching for a free list of email addresses in Denmark, our team can assist you in finding the right option tailored to your requirements.

Denmark's Leading Email Addresses: Unleash Your Campaign’s Potential

While there are sources offering free lists of email addresses in Denmark, they often lack accuracy and reliability. Free lists may contain outdated or irrelevant information, leading to ineffective marketing campaigns. Investing in a paid Denmark email address list from Emailproleads guarantees precision and relevance. These lists are constantly updated, ensuring you reach your target audience with precision and efficiency.

Denmark Business Email List by Job Roles

Want to target specific job roles within businesses in Denmark? Our Denmark B2B email list categorized by job roles is the perfect solution. Whether you need contacts in marketing, finance, or IT, our database covers a wide array of professions. Curated to perfection, our B2B lists empower your marketing strategies, ensuring your messages reach the right individuals.

Emailproleads takes customization a step further by offering Denmark B2B email lists categorized by job roles. Whether you are looking to connect with CEOs, marketing managers, or HR professionals, Emailproleads provides targeted lists that streamline your marketing efforts. By reaching the right individuals, you can pitch your services or products more effectively, increasing your conversion rates and overall business growth.

| Job Roles | Phone Number | |

|---|---|---|

| CEO | 50,000 | 50,000 |

| CFO | 40,000 | 40,000 |

| CTO | 35,000 | 35,000 |

| Manager | 30,000 | 30,000 |

| Engineer | 25,000 | 25,000 |

| Analyst | 20,000 | 20,000 |

| Designer | 18,000 | 18,000 |

| Developer | 15,000 | 15,000 |

| HR Manager | 12,000 | 12,000 |

| Marketing Specialist | 10,000 | 10,000 |

| Accountant | 8,000 | 8,000 |

| Sales Representative | 6,000 | 6,000 |

| Customer Support | 4,000 | 4,000 |

| Researcher | 3,000 | 3,000 |

| Legal Counsel | 2,000 | 2,000 |

| IT Support | 1,500 | 1,500 |

| Writer | 1,200 | 1,200 |

| Graphic Designer | 1,000 | 1,000 |

| Public Relations | 800 | 800 |

| Architect | 700 | 700 |

| Total | 300,000 | 300,000 |

Denmark Industries List

Denmark boasts a diverse industrial landscape, including sectors like technology, healthcare, and manufacturing. Our Denmark industries list provides you with access to key decision-makers within these sectors. Whether you're a service provider or a product manufacturer, our industry-specific lists offer a direct line to potential clients and collaborators.

In the heart of Scandinavia lies Denmark, a country known for its thriving business environment. At EmailProleads, we offer a comprehensive Denmark industries list, covering diverse sectors such as technology, healthcare, finance, and more. Our Denmark company email list enables you to reach decision-makers and key executives, propelling your business to new heights.

Denmark Consumer Email List

For businesses focusing on consumers, our Denmark consumer email list is a valuable asset. Accessible and effective, this list allows you to create personalized marketing campaigns targeting consumers in specific regions such as Aalborg, Esbjerg, and Frederiksberg. If you require a Denmark consumer database download, Emailproleads.com delivers accurate data to fuel your marketing endeavors.

Looking to expand your customer base in Denmark? Our Denmark consumer email list is meticulously curated, providing you access to potential clients across various states, including Copenhagen, Aarhus, and Odense. With EmailProleads, you can effortlessly download the Denmark consumer database, empowering your marketing campaigns.

Denmark B2C Email List

Looking to connect with individual consumers in Denmark? Our Denmark B2C email list offers a direct channel to reach your target audience. Whether you're promoting products, services, or special offers, our B2C list provides you with accurate contact details, ensuring your messages land in the right inboxes.

For businesses targeting consumers directly, our Denmark B2C email list offers a goldmine of opportunities. Whether you need a Denmark zip code email list or a free zip code Denmark email list, EmailProleads has you covered. Our data is accurate, reliable, and tailored to meet your specific needs.

Denmark Zip Code Email List

For location-based marketing, our Denmark zip code email list is an invaluable resource. Tailor your campaigns based on specific regions and cities, ensuring your message resonates with the local audience. Our free zip code Denmark email list options enable you to explore the potential of geographically targeted marketing without breaking the bank.

Are you interested in targeting specific regions within Denmark? Our Denmark Zip Code Email List is the ideal solution. This list is organized by zip codes, allowing you to pinpoint your audience with precision. Whether you want to focus on the bustling streets of Copenhagen or the serene landscapes of Jutland, our zip code email list has you covered.

| Location | Phone Number | |

|---|---|---|

| Copenhagen | 296186 | 296186 |

| Aarhus | 423378 | 423378 |

| Odense | 210112 | 210112 |

| Aalborg | 394625 | 394625 |

| Esbjerg | 161490 | 161490 |

| Randers | 64626 | 64626 |

| Kolding | 378783 | 378783 |

| Horsens | 220336 | 220336 |

| Vejle | 372725 | 372725 |

| Roskilde | -5237317 | -5237317 |

| Herning | 546706 | 546706 |

| Hørsholm | 394675 | 394675 |

| Helsingør | 429429 | 429429 |

| Silkeborg | 175265 | 175265 |

| Næstved | 178615 | 178615 |

| Fredericia | 372947 | 372947 |

| Viborg | 537974 | 537974 |

| Køge | 318167 | 318167 |

| Holstebro | 282445 | 282445 |

| Taastrup | 50249 | 50249 |

| Total | 571416 | 571416 |

Buy Denmark Email Database

At Emailproleads.com, we understand the importance of quality data. When you buy Denmark email database from us, you're investing in accuracy, relevance, and reliability. Our database is continuously updated, guaranteeing you access to the most recent and authentic information available in the market. Empower your marketing campaigns with our top-notch Denmark email lists.

Wondering where to buy a high-quality Denmark email database? Look no further. EmailProleads provides authentic and up-to-date data, ensuring your marketing efforts yield maximum results. Our Denmark email leads are carefully curated, allowing you to engage with potential clients and boost your sales.

Denmark Mailing List

A well-targeted mailing list is the cornerstone of successful direct marketing campaigns. Our Denmark mailing list offers you the opportunity to connect with your audience through tailored messages. Reach potential clients, partners, or customers with personalized offers, promotions, and announcements. Our mailing list solutions are designed to enhance your marketing ROI.

Curious about the cost of a mailing list in Denmark? With EmailProleads, you get exceptional value for your investment. Our Denmark mailing list is competitively priced, making it accessible for businesses of all sizes. Reach out to us today to explore our affordable packages and enhance your marketing strategies.

How Much Does a Mailing List Cost in Denmark?

The cost of a mailing list in Denmark varies based on your specific requirements. At Emailproleads.com, we offer competitive pricing tailored to your needs. Whether you need a Denmark business email list or a consumer-focused list, our team provides transparent pricing options, ensuring you get the best value for your investment.

The cost of a mailing list in Denmark varies depending on your specific requirements. Factors such as the type of data (B2B or B2C), the quantity of records, and the level of customization all influence the price. EmailProleads offers competitive pricing options that cater to businesses of all sizes. To get a precise quote for your mailing list, reach out to our team, and we'll be happy to assist you.

Email List Providers in Denmark

When it comes to choosing email list providers in Denmark, Emailproleads.com stands as a trusted name. Our commitment to quality, accuracy, and customer satisfaction sets us apart. We prioritize your success by providing you with the most reliable and effective email lists, empowering your marketing campaigns and helping you achieve your goals.

Searching for the best email list providers in Denmark? Your quest ends here. EmailProleads stands out as a trusted provider, delivering accurate and comprehensive data tailored to your requirements. Access our free email address directory Denmark to kickstart your email marketing campaigns effectively.

Who are the Best Email List Providers in Denmark?

The best email list providers in Denmark are the ones that prioritize accuracy, relevance, and customer satisfaction. Emailproleads.com excels in these areas, ensuring our clients receive top-notch service. Our commitment to quality and our extensive database make us the preferred choice for businesses and marketers seeking reliable Denmark email lists.

The best email list providers in Denmark are those that offer accurate, up-to-date data, compliance with regulations, and excellent customer service. EmailProleads consistently meets these criteria and is recognized as a top provider. Our Denmark email lists are carefully curated to ensure the highest quality and deliverability.

Email Directory Denmark

Need a free email address directory in Denmark? Our Email Directory Denmark service offers you access to a wealth of email addresses, allowing you to expand your network and connect with potential clients or partners. Our directory is constantly updated, ensuring you have access to the latest contacts within various industries and regions.

While EmailProleads offers premium email lists, we also provide free resources to help you kickstart your marketing campaigns. We offer a Denmark Email List Free sample that you can download and explore. This sample allows you to get a glimpse of the quality and depth of our data. If you find it valuable, you can then explore our premium options to access even more data.

Denmark Email List Free

If you're wondering, "How do I get a free Denmark email list?", Emailproleads.com has the solution for you. We offer a Denmark email list free option, allowing you to explore the benefits of our services without any financial commitment. Test our data quality and see the difference our accurate and up-to-date email lists can make in your marketing efforts.

At EmailProleads, we understand the importance of cost-effective solutions. While we offer premium services, our Denmark email list free samples allow you to experience the quality of our data before making a purchase. This ensures you make an informed decision and choose the best email marketing lists for Denmark.

Email Marketing Lists Denmark

Email marketing is a powerful tool for businesses, and our Email Marketing Lists Denmark service provides you with the means to execute successful campaigns. Reach your target audience effectively, promote your products or services, and boost your sales with our meticulously curated email lists. Our data is tailored to your needs, ensuring your messages land in the right inboxes.

Email marketing is a highly effective way to reach your target audience in Denmark. With our Denmark email lists, you can create customized email marketing campaigns that resonate with your recipients. Whether you're promoting products, services, or events, email marketing can help you achieve your goals.

Is email Marketing Legal in Denmark?

Yes, email marketing is legal in Denmark, provided you adhere to the relevant regulations and obtain consent from recipients. At Emailproleads.com, we prioritize compliance and ethical practices. Our email marketing lists for Denmark are curated with legal and ethical considerations in mind, ensuring your campaigns are not only effective but also fully compliant with the law.

Rest assured, email marketing is legal in Denmark as long as you adhere to the country's regulations. With our Denmark contact number list and mobile phone number list, you can engage with your audience ethically and effectively. EmailProleads provides compliant data, ensuring your marketing efforts remain lawful and successful.

Denmark Contact Number List

In addition to email addresses, our Denmark contact number list offers you access to phone numbers, enabling you to diversify your communication channels. Reach out to potential clients or partners via phone calls or SMS, enhancing your chances of making meaningful connections. Our comprehensive contact number lists are updated regularly, guaranteeing accuracy and relevance.

In addition to email addresses, EmailProleads also provides a Denmark Contact Number List. This list includes phone numbers for both businesses and consumers in Denmark, allowing you to connect with your audience through phone calls and text messages.

Denmark Mailing Lists: Your Key to Effective Direct Marketing

DENMARK EMAIL LISTS FAQs

How to address Denmark mail?

When addressing mail in Denmark, include the recipient's name, street address, postal code, and city. For a hassle-free mailing experience, verify the accuracy of recipient details. If you're seeking a reliable provider for Denmark email lists, consider EmailProleads for your business needs.

How are Denmark addresses written?

Denmark addresses typically follow this format: recipient's name, street name and number, postal code, and city. It's crucial to ensure accurate details to avoid delivery issues. For updated and targeted email lists in Denmark, trust EmailProleads for authentic data.

How should be a Denmark email format?

A standard Denmark email format includes a unique username, "@" symbol, domain name, and the top-level domain (e.g., ".dk"). For businesses aiming to enhance their outreach, EmailProleads provides tailored Denmark email lists, ensuring effective communication with your target audience.

What do Denmark addresses look like?

Denmark addresses comprise the recipient's name, street address, postal code, and city. It's essential to have the correct format for successful mail delivery. For businesses seeking accurate and verified email addresses, EmailProleads offers reliable Denmark email lists tailored to your requirements.

List of companies in Denmark with email address?

For a comprehensive list of companies in Denmark with verified email addresses, EmailProleads is your trusted provider. Access a wide array of businesses, ensuring your marketing campaigns reach the right audience effectively.

What are email Denmark etiquette?

In Denmark, email etiquette emphasizes politeness and professionalism. Use formal language, be concise, and respond promptly. For businesses needing targeted email lists to uphold these standards, EmailProleads offers authentic Denmark email databases to enhance your outreach strategies.

What does a Denmark email address look like?

A Denmark email address consists of a unique username, followed by "@" and the domain name (e.g., "[email protected]"). To enhance your marketing efforts with precise and targeted email addresses, trust EmailProleads for authentic Denmark email lists tailored to your business needs.

What email does Denmark use?

Denmark primarily uses email services with the domain ".dk". For businesses aiming to connect with the Danish market, EmailProleads provides accurate and up-to-date Denmark email lists, ensuring your messages reach the right recipients effectively.

Does Denmark use Gmail?

Yes, many individuals and businesses in Denmark use Gmail as their preferred email service provider. If you're looking to target Gmail users in Denmark, EmailProleads can provide you with segmented email lists, enabling focused and successful marketing campaigns.

Does Denmark Post require a signature?

Yes, Denmark Post may require a signature for certain mail deliveries, especially for valuable or important items. It's advisable to check the specific requirements for your package. For businesses seeking reliable email list providers in Denmark, EmailProleads ensures signature-quality service, delivering accurate and verified email addresses.

Why does Denmark use email instead of SMS?

Denmark, like many countries, relies on email for its efficiency, formality, and ease of communication. Emails allow for detailed information exchange, making them preferable for various professional and personal interactions. For businesses wanting to tap into Denmark's email culture, Emailproleads offers tailored Denmark email lists, ensuring your messages are well-received.

Denmark Email List Summary

In summary, Emailproleads.com is your one-stop solution for all your Denmark email list needs. Whether you require a Denmark business email list, a consumer-focused database, or industry-specific contacts, our services are tailored to your requirements. With our accurate and up-to-date email lists, you can supercharge your marketing campaigns, connect with your target audience, and achieve remarkable results.

When you choose Emailproleads.com, you're choosing accuracy, reliability, and excellence in service. Don't miss out on the opportunity to elevate your marketing strategies. Buy Denmark email list, Denmark business email list, Denmark email address list, and Denmark mailing list from us today, and experience the difference of working with a leading provider in the industry. Your success starts with the right connections, and Emailproleads.com is here to help you make those connections count.

To get started, visit EmailProleads.com and explore our range of Denmark email lists. Reach out to our team for personalized assistance and discover how our data can propel your marketing strategies to new heights.